An average of 37,000 fires on manufacturing and industrial properties are reported each year in the United States according to the National Fire Protection Association. Tragically, these fires result in well over a thousand injuries and dozens of fatalities annually. The first priority of rescue crews during building fires is to get people who are either trapped or incapacitated out of harm’s way before it is too late. This can be quite a challenge in the heat of the moment, especially in large commercial or industrial spaces with many rooms and complex floor plans. Fires often move very fast, and time spent searching a building without specific information about where to look is not an efficient method of finding those in need of rescue.

Problems like this are just begging for a solution, and since machine learning is quite good at detecting people, I started to think about ways that this technology could be used to assist first responders in their search and rescue efforts. I ultimately decided to build a smart smoke alarm. Whereas a traditional smoke detector sounds its alarm and calls it a day, my device jumps into action at that point by activating a thermal camera that can see through darkness and smoke. The measurements captured by the thermal camera are classified using a neural network developed with Edge Impulse that can recognize the presence of people. If people are detected, their location is reported wirelessly to a remote database that rescue crews could access to help focus their search.

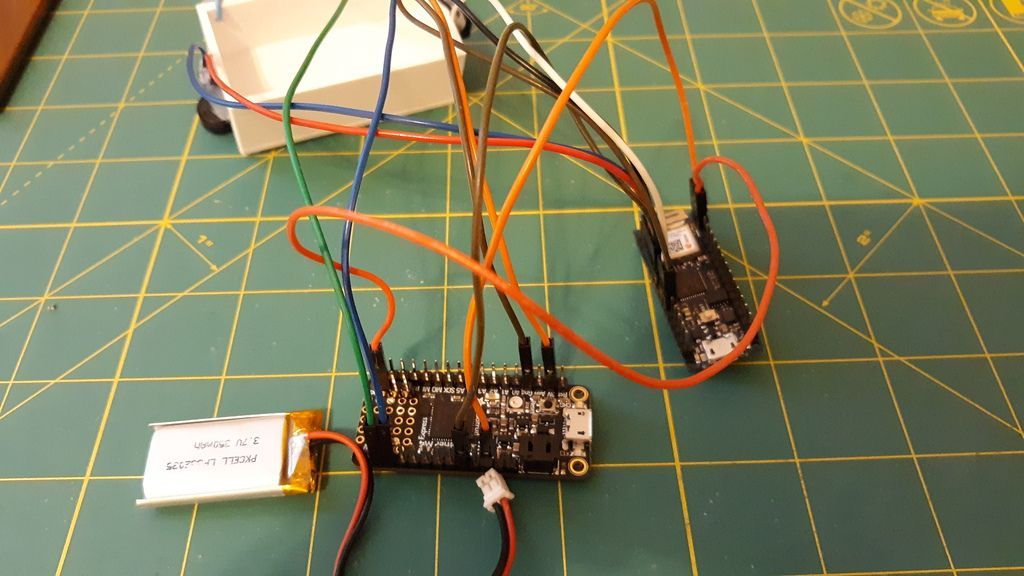

To build the prototype, I relied on a pair of microcontroller development boards. First, an Adafruit Feather M4 Express was selected for its Arm Cortex-M4 MCU running at 120 MHz and 192 KB of RAM, which made it ideal for running the machine learning classifier. This board was also responsible for capturing measurements from the thermal camera. The second development board is an Arduino Nano 33 IoT, and this one was given the role of acting as a simulated smoke detector and handling wireless communications. Rather than build an actual smoke detector (I did not want to have to start a fire to test it!) I wired a push button to the Nano 33 IoT to trigger an audible alarm via a piezo buzzer. This also started the image capture and classification process. In the event that a person was detected by this process during an active smoke alarm, the Nano 33 IoT would send the location information and timestamp to a remote web API that stores it in a database. An Adafruit MLX90640 24x32 IR Thermal Camera, LiPo battery, and a 3D-printed case rounded out the hardware components for the device.
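
The reporting rule described above (send a report only when a person is detected while the alarm is active) can be sketched in a few lines of Python. This is an illustration of the logic, not the firmware that runs on the boards. The confidence threshold, the `Classification` type, and the JSON field names are all assumptions made for the example.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Hypothetical confidence cutoff; the article does not state one.
PERSON_THRESHOLD = 0.8


@dataclass
class Classification:
    """Scores for the two classes the model distinguishes."""
    person: float
    empty: float


def build_alert(alarm_active: bool, result: Classification,
                location: str, timestamp: str) -> Optional[str]:
    """Return a JSON payload if a person is seen during an active alarm, else None."""
    if not alarm_active:
        return None  # results are ignored unless the alarm is sounding
    if result.person < PERSON_THRESHOLD:
        return None  # room looks empty, nothing to report
    # Field names are illustrative; the real web API's schema is not shown.
    return json.dumps({"location": location, "timestamp": timestamp})
```

Keeping the decision in one small function makes it easy to check the cases that matter: no alarm, alarm with an empty room, and alarm with a person present.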

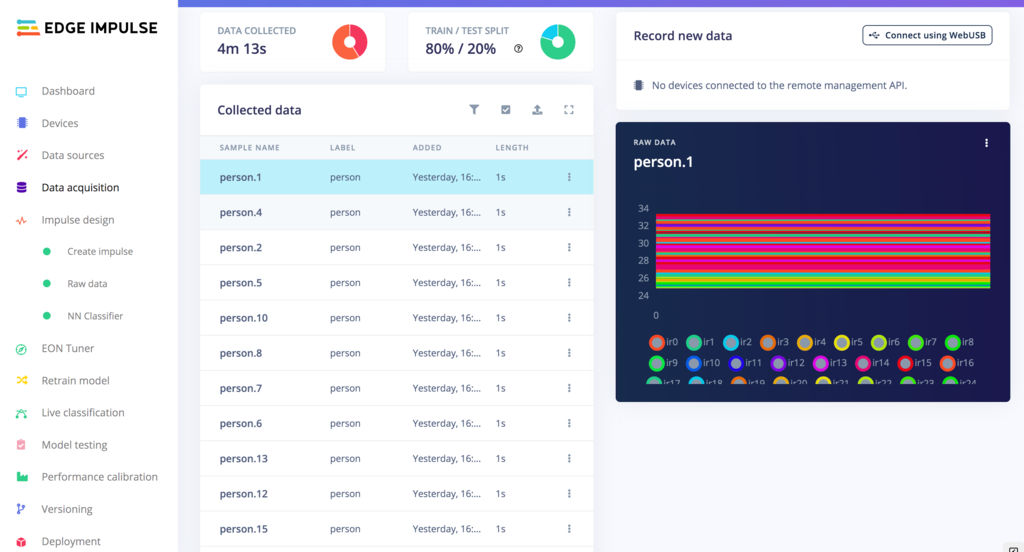

For the classifier to learn how to recognize the thermal appearance of people, I needed to put together a labeled dataset. I wrote an Arduino sketch to collect measurements from the thermal camera under two conditions — when a person was present, and when the room was empty. I took many thermal images of myself standing, sitting, walking, and otherwise moving about the room for the “person” class, and the “empty” class is self-explanatory. In total, I collected 189 “person” images, and 130 “empty” images, which I then transformed to CSV files with a simple Python script before uploading them to my Edge Impulse project. The data acquisition tool automatically assigned labels based on the file names, and also split the data into training and testing sets.
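
For readers who want to try something similar, here is a minimal sketch of what such a conversion script might look like. It is not the script used in this project. The CSV layout (a `timestamp` column plus one `temperature` column, one row per pixel) and the `label.index.csv` naming pattern are assumptions, chosen so that the label appears at the start of the file name.

```python
import csv
import io

ROWS, COLS = 24, 32  # MLX90640 resolution stated in the article


def frame_to_csv(frame, interval_ms=1):
    """Serialize one flattened thermal frame as CSV text, one row per pixel."""
    if len(frame) != ROWS * COLS:
        raise ValueError(f"expected {ROWS * COLS} values, got {len(frame)}")
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    # Assumed layout: a timestamp column followed by a single value column.
    writer.writerow(["timestamp", "temperature"])
    for i, value in enumerate(frame):
        writer.writerow([i * interval_ms, f"{value:.2f}"])
    return buf.getvalue()


def sample_filename(label, index):
    """Build a name such as 'person.007.csv' so the label leads the file name."""
    return f"{label}.{index:03d}.csv"
```

A frame captured with a person in view would then be written to a file named with `sample_filename("person", n)` and an empty-room frame with `sample_filename("empty", n)`.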

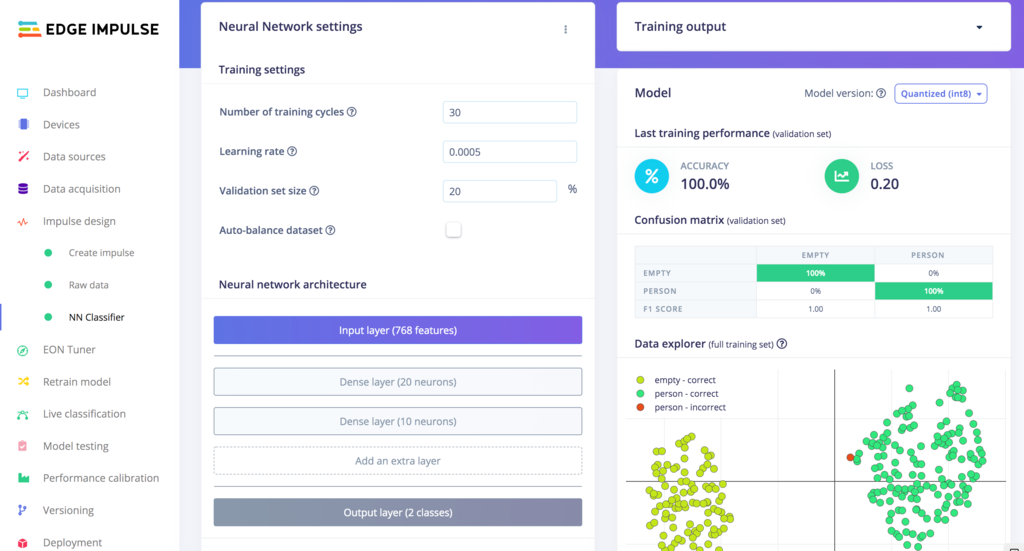

Designing the machine learning model in Edge Impulse Studio was the simplest part of the entire project. I created an impulse that simply forwards the raw thermal measurements into a neural network classifier block. I kept the default neural network layers and hyperparameters, then clicked the “Start training” button to begin teaching the model to distinguish the appearance of people. In less than a minute the training was complete, and the classification accuracy was reported as 100% right off the bat. That sounded too good to be true, so I used the model testing tool as a second check. It evaluates the model on the 20% of the uploaded data that was held out from the training process. That showed an average classification accuracy of 96.88%, confirming that the model was working very well. There was really no need to improve on this for a proof of concept, so I moved on to loading this model onto my hardware.
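
To put that test figure in perspective, a little arithmetic helps. The sketch below assumes the 20% holdout came to exactly 64 of the 319 images; the real split may differ by a sample or two, so treat this as a plausibility check rather than a record of the actual results.

```python
person_images = 189
empty_images = 130
total_images = person_images + empty_images  # 319 images in all

test_fraction = 0.20
# 319 * 0.2 = 63.8, which rounds to 64 (assumed size of the test set)
test_count = round(total_images * test_fraction)
train_count = total_images - test_count


def accuracy_percent(correct, n):
    """Percentage of samples classified correctly."""
    return 100 * correct / n


# Two mistakes out of 64 test samples gives 96.875%,
# which is 96.88% when shown to two decimal places.
two_errors = accuracy_percent(test_count - 2, test_count)
```

Under that assumption, the reported accuracy is consistent with just two misclassified test images. It is also a reminder that, with a test set this small, each individual error moves the score by more than 1.5 percentage points.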

Edge Impulse offers many options for deployment, but in my case the best option was the "Arduino library" download. This packaged up the entire classification pipeline as a compressed archive that I could import into Arduino IDE, then modify as needed to add my own logic (like to communicate with the Nano 33 IoT to send messages over Wi-Fi, for example). After uploading this sketch to the Feather M4 Express, and also the simulated smoke detector sketch to the Nano 33 IoT, I was able to install the completed device near the ceiling of my home office, looking down at an angle where it had a view of the entire room.

This device worked surprisingly well in my real-world testing. In dozens of tests, I did not have a single false positive or false negative. While my testing was done in my relatively small home office, I expect this method would scale up to cover a large office space, factory floor, or warehouse with similar accuracy. Covering a larger area would, however, require a thermal camera with a higher resolution than the 24x32 pixel model used in this proof of concept.
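
A back-of-the-envelope calculation shows why resolution becomes the limiting factor. The sketch below estimates how much of the scene a single pixel covers at a given distance. The 110 degree horizontal field of view and the distances are assumptions for illustration (this article does not state which lens variant was used), and the formula treats every pixel as covering an equal slice of a flat scene, which is only an approximation.

```python
import math


def pixel_footprint_m(distance_m, fov_deg, pixels):
    """Approximate scene width (in meters) covered by one pixel at a distance."""
    scene_width = 2 * distance_m * math.tan(math.radians(fov_deg) / 2)
    return scene_width / pixels


# Assumed values: 110 degree horizontal FOV across the sensor's 32 columns.
near = pixel_footprint_m(distance_m=3, fov_deg=110, pixels=32)   # about 0.27 m
far = pixel_footprint_m(distance_m=12, fov_deg=110, pixels=32)   # about 1.07 m
```

With these assumed numbers, a person roughly half a meter wide spans about two pixels at 3 m, but less than one pixel at 12 m. At that point there is very little shape left for a classifier to work with, so a larger space calls for more pixels, more cameras, or both.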

Discover just how easy it can be to innovate with Edge Impulse by taking a peek at my public Edge Impulse project. You can also find some more details, and the source code, on the project documentation page.

Want to see Edge Impulse in action? Schedule a demo today.