I’m Milan Ferus-Comelo, one of the 2026 Edge Impulse Campus Ambassadors, and from March 10–12, I had the chance to attend embedded world 2026 in Nuremberg, the largest gathering of the global embedded community. Spread across seven massive exhibition halls, the event showcased everything from cutting-edge hardware to real-world demos. But beyond the scale, one shift stood out clearly: edge AI is redefining how embedded systems are built and deployed.

Edge AI is no longer a side conversation

In previous years at the fair, edge AI often felt like a niche tucked between traditional embedded workflows: experimental and promising, but not yet central.

This year, it was everywhere.

From industrial automation booths to tiny sensor startups, the narrative had shifted: AI is moving from the cloud into the device itself. Conversations weren’t about if edge AI makes sense, but how fast teams can deploy it in production.

What stood out most was how practical everything has become. Instead of abstract demos, companies showed real systems: vision-based quality inspection, predictive maintenance on-device, and fully offline human-machine interfaces. Latency, privacy, and reliability are driving AI to the edge.

On Tuesday, March 10, the opening night of the conference, I attended the IoT Stars event to hear industry leaders speak candidly about the hurdles and breakthroughs we are facing. I got to listen to some incredible panel talks featuring experts from Edge Impulse, Blues, Avnet, and STM. A massive highlight of the night was hearing directly from the CPO of Arduino, who took the stage to discuss their vision for the future of hardware and edge computing.

The Launch of the Arduino VENTUNO Q

That conversation at IoT Stars tied perfectly into one of my personal highlights and one of the biggest hardware announcements of the entire conference: the official launch of the Arduino VENTUNO Q, a board that makes a statement about where the ecosystem is heading.

The VENTUNO Q combines a high-performance Qualcomm Dragonwing processor with a real-time microcontroller in what Arduino calls a “dual-brain” architecture, enabling systems to perceive, decide, and act on a single board.

With up to 40 TOPS of AI compute, support for local LLMs, and tight integration with tools like Edge Impulse, the board makes it clear that the barrier between embedded development and advanced AI is collapsing.

Alongside the VENTUNO Q, the new Arduino UNO Q board, with a similar architecture, was also making waves. Over at the Qualcomm booth, I got to see the UNO Q being used for high-speed tracking of slot cars racing around the track using Edge Impulse’s FOMO (Faster Objects, More Objects) algorithm.

At Edge Impulse, we design our platform to integrate seamlessly with hardware exactly like this. By deploying optimized C++ models directly to these boards, developers can build smart devices in a matter of hours. If you want to get your hands dirty with this new hardware right now, you have to check out the Invent the Future with Arduino UNO Q and App Lab Hackathon.

(Curious how the deployment process works? Check out our Edge Impulse documentation on deploying to the UNO Q to see how easy it is).

Student Day: The next generation is already here

As an Edge Impulse Campus Ambassador, my absolute favorite part of the week was Thursday's Student Day. Over 800 students from colleges and universities across Europe flooded the convention center to connect with the industry.

I spoke with students who were already building end-to-end ML pipelines on microcontrollers, others experimenting with TinyML for the first time, and many who were trying to bridge the gap between theory-heavy coursework and real-world deployment. One conversation that really stuck with me was with a group of engineering students from Munich. They were building a solar-powered environmental monitor for a university project but were struggling with the massive power draw of constantly sending raw sensor data to the cloud. We ended up discussing how running a small classification model directly on the device could help reduce that load, only transmitting data when something unusual is detected. It was a great exchange, and you could see how that approach opened up a new way of thinking about their design.
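To make that idea concrete, here is a minimal Python sketch of the "only transmit when something is unusual" pattern we talked about. It is an illustration under stated assumptions, not the students' design and not Edge Impulse code: a simple rolling z-score check stands in for a trained classification model, and the names `AnomalyGate` and `filter_readings` are hypothetical.

```python
from collections import deque
from statistics import mean, pstdev


class AnomalyGate:
    """Decides on-device whether a sensor reading is worth transmitting.

    Assumption: a rolling z-score is used here as a stand-in for a
    trained classification model running on the device.
    """

    def __init__(self, window=20, threshold=3.0, warmup=5):
        self.history = deque(maxlen=window)  # recent "normal" readings
        self.threshold = threshold           # z-score that counts as unusual
        self.warmup = warmup                 # samples needed for a baseline

    def is_unusual(self, reading):
        # Until the baseline has enough samples, just learn and stay quiet.
        if len(self.history) < self.warmup:
            self.history.append(reading)
            return False

        baseline = mean(self.history)
        spread = pstdev(self.history) or 1e-9  # avoid division by zero
        unusual = abs(reading - baseline) / spread > self.threshold

        # Only normal readings update the baseline, so one spike
        # does not distort what "normal" looks like.
        if not unusual:
            self.history.append(reading)
        return unusual


def filter_readings(readings, gate):
    """Return only the readings that would be sent to the cloud."""
    return [r for r in readings if gate.is_unusual(r)]


if __name__ == "__main__":
    temperatures = [20.0, 20.1, 19.9, 20.0, 20.2, 20.1, 35.0, 20.0]
    print(filter_readings(temperatures, AnomalyGate()))
```

In this toy run, only one of the eight readings would leave the device. On real hardware, the check inside `is_unusual` would be replaced by the output of a deployed model, and the radio would stay off for everything else.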

Seeing the enthusiasm of the next generation of engineers was incredible. The challenges of tomorrow, from ethical AI deployment to complex system architectures, will be solved by the students who were walking the floor that day. Bridging that gap between the classroom and the industry, and getting students hyped about ML, is exactly why I love being a Campus Ambassador.

A more mature ecosystem

What I appreciated a lot this year was how connected the ecosystem has become.

Hardware vendors are no longer operating in isolation. Tooling platforms, silicon providers, and developer ecosystems are starting to align:

- unified development environments

- better support for on-device ML workflows

- tighter integration between hardware and AI tooling

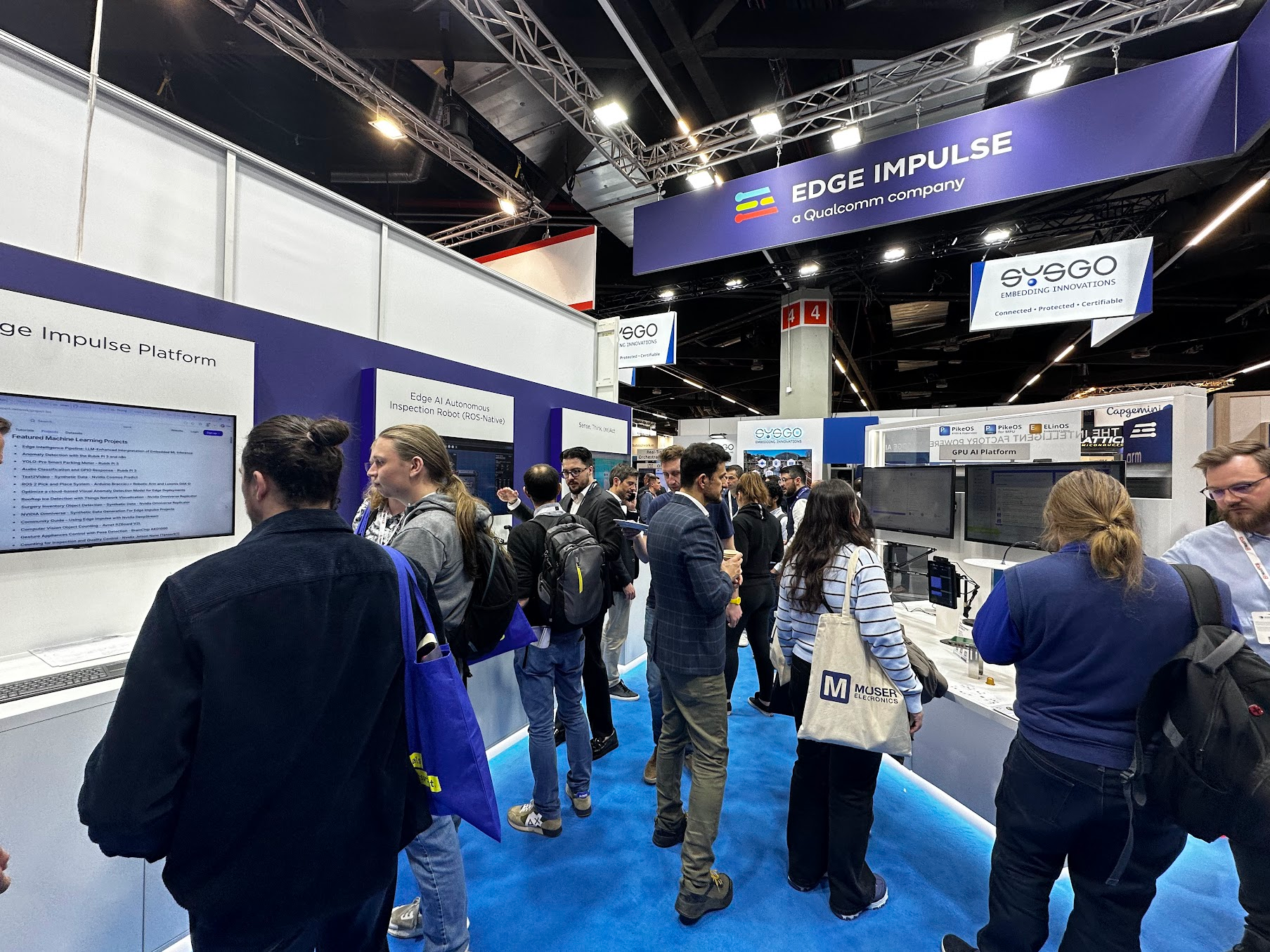

This is where platforms like Edge Impulse play a key role: bridging the gap between data collection, model training, and deployment.

Final thoughts

embedded world 2026 made one thing very clear: We’re moving from connected devices to intelligent devices. The shift to edge AI is as much about performance as it is about enabling systems that can operate independently, make decisions locally, and interact with the physical world in real time. And if this year is any indication, the pace of that transition is only accelerating.

I’m already excited to see what 2027 brings.

Ready to start building your own edge AI applications? Sign up for your free developer account to train and deploy your first machine learning model today!