A persistent cough often accompanies respiratory illnesses, but tracking its frequency and severity accurately over extended periods has traditionally relied on subjective assessments from healthcare providers or patient self-reporting. Technology startup Hyfe is addressing this with an innovative approach that embeds microphones in wearable devices to detect and classify coughs automatically.

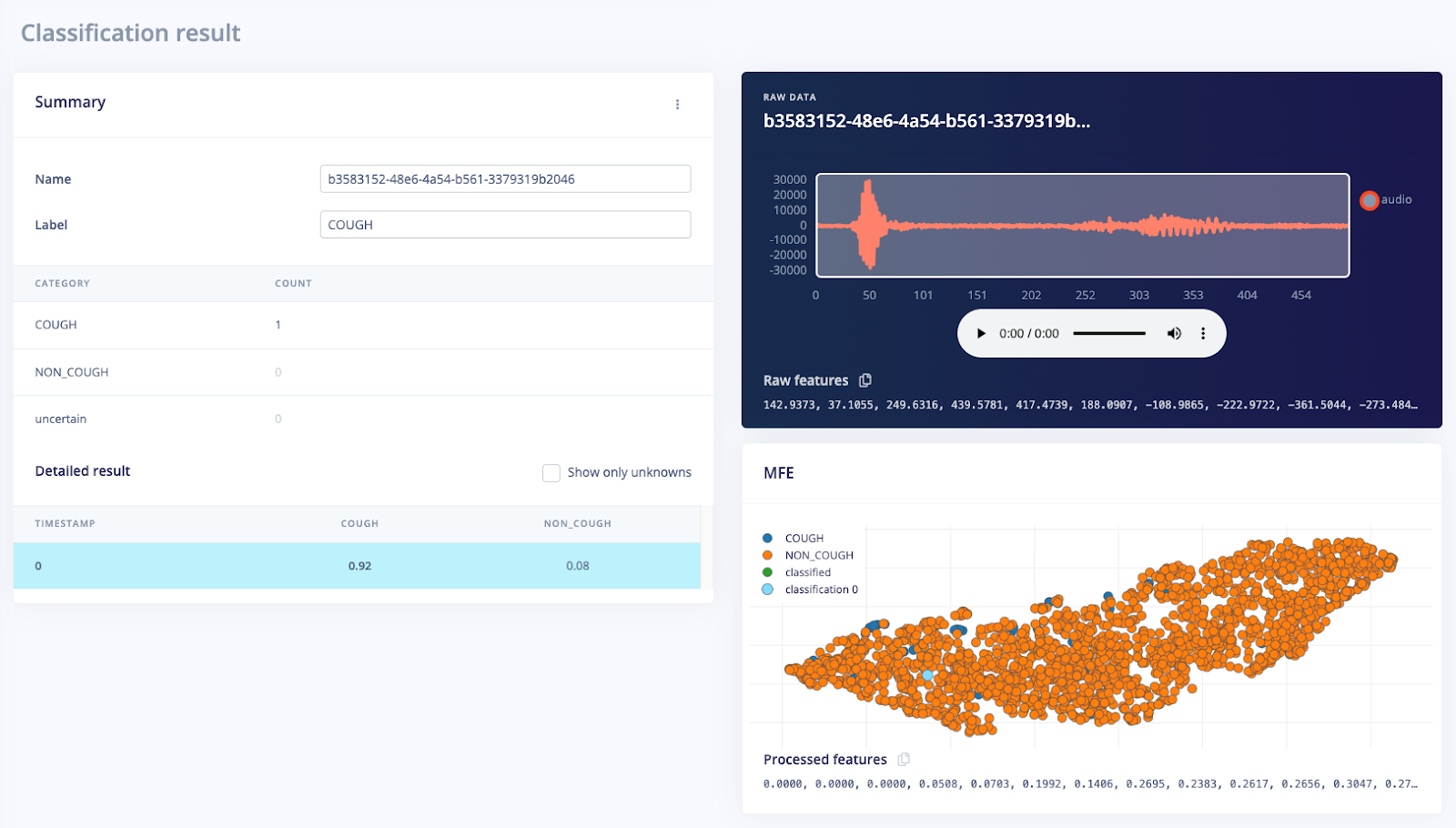

To develop its neural cough classifier, Hyfe chose Edge Impulse, a leading development platform for edge AI.

“…Edge Impulse follows a philosophy of being customizable and adaptable by machine learning specialists who can contribute their expertise through techniques such as hand-crafted model architectures and loss functions, and customized operator kernels.” —Towards a Neural Cough Classifier for Edge Devices, Hyfe

Building the Dataset

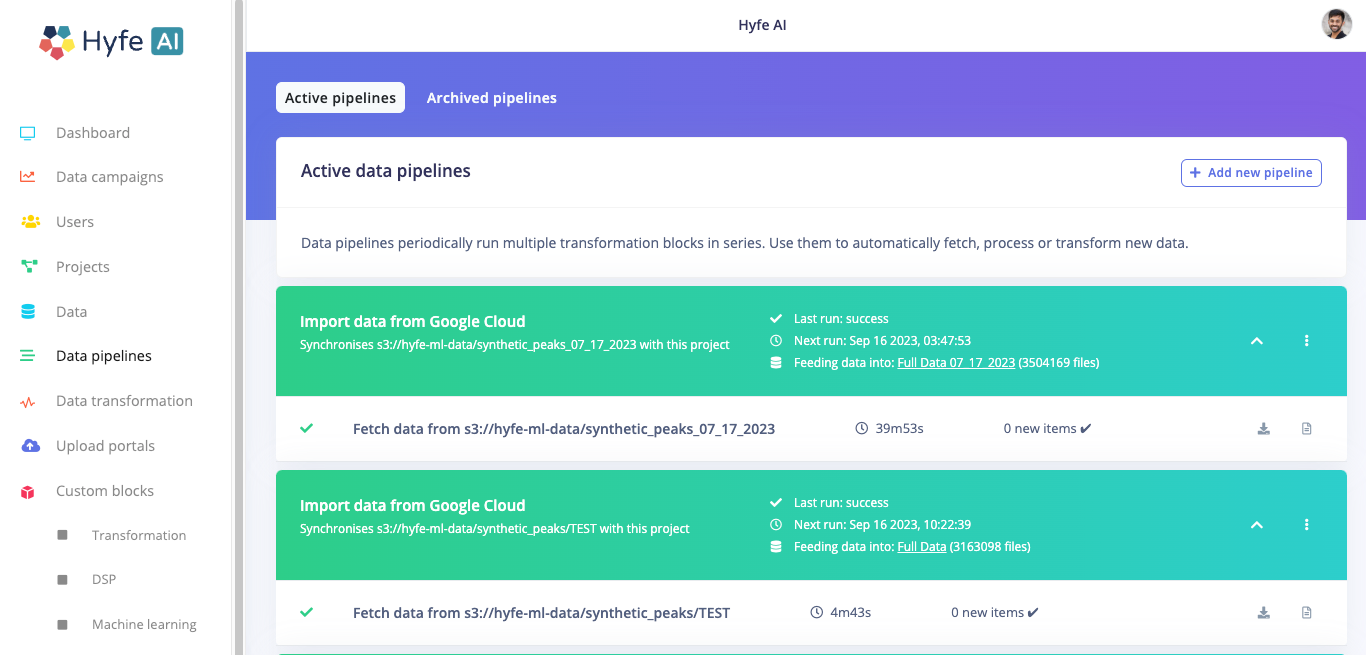

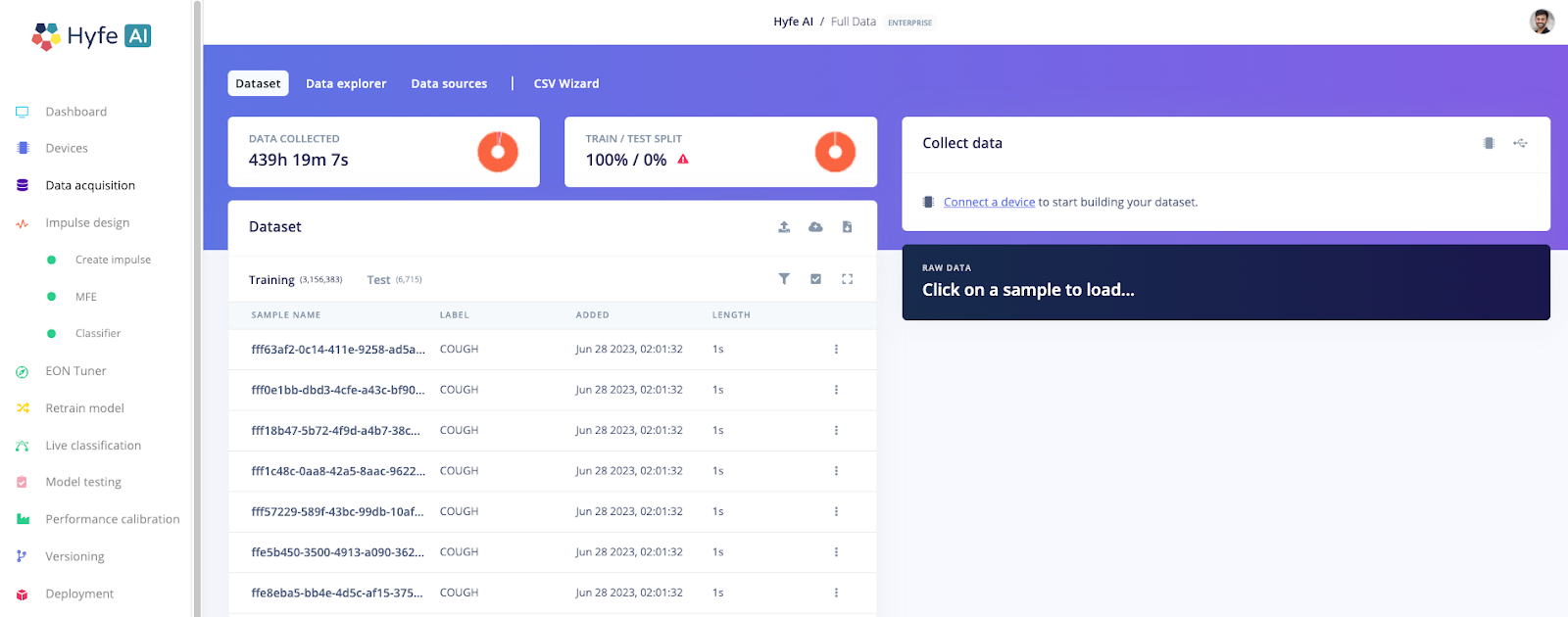

Hyfe's dataset consists of millions of cough and non-cough audio samples. The full dataset was uploaded to the Edge Impulse (EI) platform, downsampled from 44.1 kHz to 16 kHz, and encoded as 16-bit PCM files. This was done with an Enterprise feature called data pipelines, which lets Enterprise users set up automated pipelines that regularly check whether new data needs to be fetched from their cloud bucket. In this case, Hyfe kept its dataset in Google Cloud Storage.
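For readers who want to see what this conversion involves, here is a minimal sketch of downsampling 44.1 kHz audio to 16 kHz and quantizing it to 16-bit PCM. It assumes NumPy and SciPy are available and uses SciPy's polyphase resampler; the function name `downsample_to_pcm16` and the test tone are hypothetical, and this is not the code that Edge Impulse data pipelines run.

```python
from math import gcd

import numpy as np
from scipy.signal import resample_poly


def downsample_to_pcm16(samples, src_rate=44_100, dst_rate=16_000):
    """Resample float audio in [-1, 1] and quantize it to 16-bit PCM."""
    # Reduce the rate ratio to lowest terms: 16000/44100 -> 160/441.
    divisor = gcd(src_rate, dst_rate)
    up, down = dst_rate // divisor, src_rate // divisor
    # Polyphase resampling applies an anti-aliasing low-pass filter internally.
    resampled = resample_poly(samples, up, down)
    # Clip before scaling so filter overshoot cannot wrap around in int16.
    clipped = np.clip(resampled, -1.0, 1.0)
    return np.round(clipped * 32767.0).astype(np.int16)


# Hypothetical input: one second of a 440 Hz tone sampled at 44.1 kHz.
t = np.arange(44_100) / 44_100
tone = 0.5 * np.sin(2 * np.pi * 440.0 * t)
pcm = downsample_to_pcm16(tone)
print(pcm.dtype, pcm.shape)
```

One second of input at 44.1 kHz yields 16,000 output samples, which is where the storage and compute savings for later processing come from.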

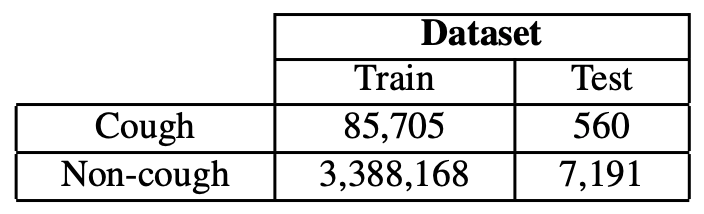

The dataset was divided into the following training and test sets:

Creating the Model

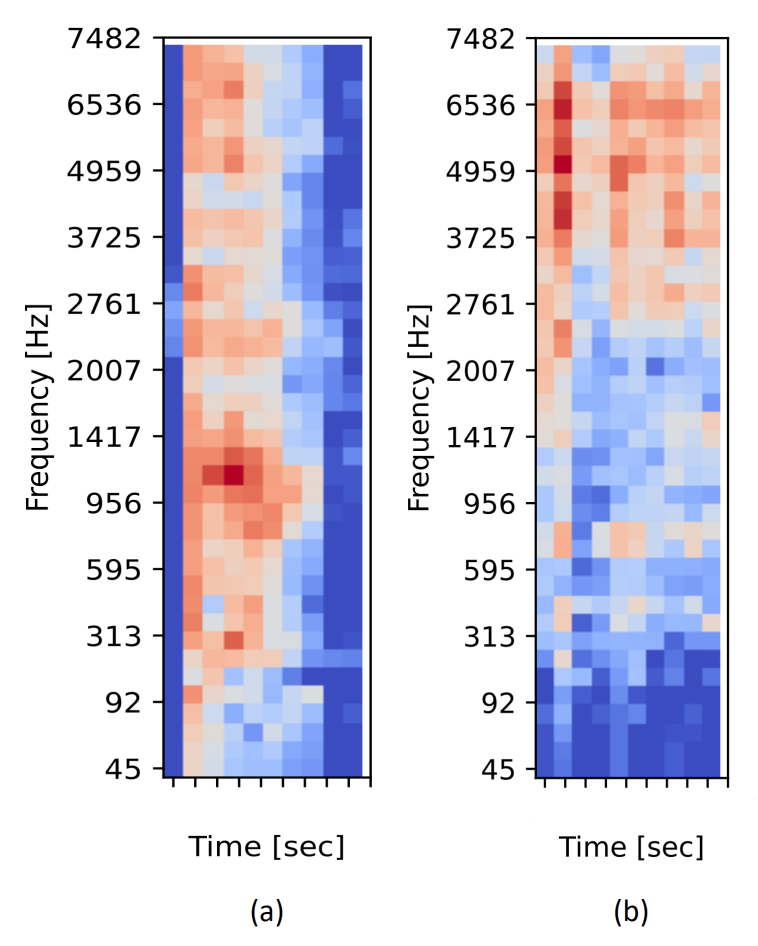

MFEs for Acoustic Representation

Hyfe selected Mel-Filterbank Energies (MFEs) because they model the spectral energy distribution in a way that aligns with human auditory perception. MFEs capture the non-linear frequency sensitivity of the human auditory system by spacing their frequency bands according to the Mel scale.
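To make the idea concrete, the sketch below computes log Mel-filterbank energies for a single audio frame with NumPy. It assumes the common Mel formula m = 2595 · log10(1 + f / 700), triangular filters, and a Hann window; the filter count (32), FFT size (512), and frame length (400 samples) are illustrative values, not Hyfe's settings, and the code is not Edge Impulse's MFE block.

```python
import numpy as np


def hz_to_mel(hz):
    return 2595.0 * np.log10(1.0 + hz / 700.0)


def mel_to_hz(mel):
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_filterbank(n_filters, n_fft, sample_rate):
    """Triangular filters with centres evenly spaced on the Mel scale."""
    # n_filters + 2 edge points: each filter spans [left, centre, right].
    top_mel = float(hz_to_mel(sample_rate / 2.0))
    hz_points = mel_to_hz(np.linspace(0.0, top_mel, n_filters + 2))
    fft_freqs = np.fft.rfftfreq(n_fft, d=1.0 / sample_rate)
    bank = np.zeros((n_filters, fft_freqs.size))
    for i in range(n_filters):
        left, centre, right = hz_points[i:i + 3]
        rising = (fft_freqs - left) / (centre - left)
        falling = (right - fft_freqs) / (right - centre)
        bank[i] = np.clip(np.minimum(rising, falling), 0.0, None)
    return bank


def mfe_frame(frame, bank):
    """Log Mel-filterbank energies for one frame of audio."""
    n_fft = 2 * (bank.shape[1] - 1)
    windowed = frame * np.hanning(frame.size)
    power = np.abs(np.fft.rfft(windowed, n=n_fft)) ** 2
    # The small constant avoids log(0) for silent bands.
    return np.log(bank @ power + 1e-10)


# Illustrative settings: 16 kHz audio, 25 ms frame, 1 kHz test tone.
sample_rate, n_fft, n_filters = 16_000, 512, 32
bank = mel_filterbank(n_filters, n_fft, sample_rate)
t = np.arange(400) / sample_rate
frame = np.sin(2 * np.pi * 1000.0 * t)
energies = mfe_frame(frame, bank)
print(energies.shape)  # (32,)
```

Because the filter centres are evenly spaced in Mel rather than in Hz, the low-frequency filters are narrow and the high-frequency filters are wide, which is the non-linear resolution described above.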

Neural Network Models

To develop the cough detection system, Hyfe experimented with three types of neural network models:

Alison-based Models: These models relied on 2D convolutional layers with an architecture similar to that of Alison, Hyfe’s production model.

2D-CNNs: These models also used 2D convolutional layers but did not strictly adhere to Alison’s architecture.

Fully Connected Deep Neural Networks: These models consisted of fully connected layers with dropout layers in between.
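As an illustration of the third family, the following NumPy sketch runs a forward pass through fully connected layers with dropout between them. The layer sizes, ReLU activations, softmax output, and dropout rate are all assumptions made for the example; the source does not specify Hyfe's layer configuration.

```python
import numpy as np


def dense_forward(x, layers, drop_rate=0.0, rng=None):
    """Forward pass through fully connected layers with dropout in between.

    `layers` is a list of (weights, bias) pairs. Dropout is applied after each
    hidden layer only when drop_rate > 0, i.e. during training.
    """
    if rng is None:
        rng = np.random.default_rng()
    for index, (weights, bias) in enumerate(layers):
        x = x @ weights + bias
        if index == len(layers) - 1:
            break  # the output layer gets no activation or dropout here
        x = np.maximum(x, 0.0)  # ReLU on hidden layers (assumed)
        if drop_rate > 0.0:
            # Inverted dropout: zero units at random and rescale the survivors
            # so the expected activation matches inference.
            keep = rng.random(x.shape) >= drop_rate
            x = x * keep / (1.0 - drop_rate)
    # Softmax over the two classes (cough, non-cough).
    exp = np.exp(x - x.max(axis=-1, keepdims=True))
    return exp / exp.sum(axis=-1, keepdims=True)


# Hypothetical sizes: 64 input features, two hidden layers, two classes.
rng = np.random.default_rng(0)
sizes = [64, 32, 16, 2]
layers = [
    (rng.normal(scale=0.1, size=(n_in, n_out)), np.zeros(n_out))
    for n_in, n_out in zip(sizes, sizes[1:])
]
features = rng.normal(size=(4, 64))
probs = dense_forward(features, layers)  # inference: no dropout
train_probs = dense_forward(features, layers, drop_rate=0.25, rng=rng)
print(probs.shape)  # (4, 2)
```

Dropout only matters during training; at inference time the network is a plain stack of matrix multiplications, which keeps it cheap on a microcontroller.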

Performance Metrics

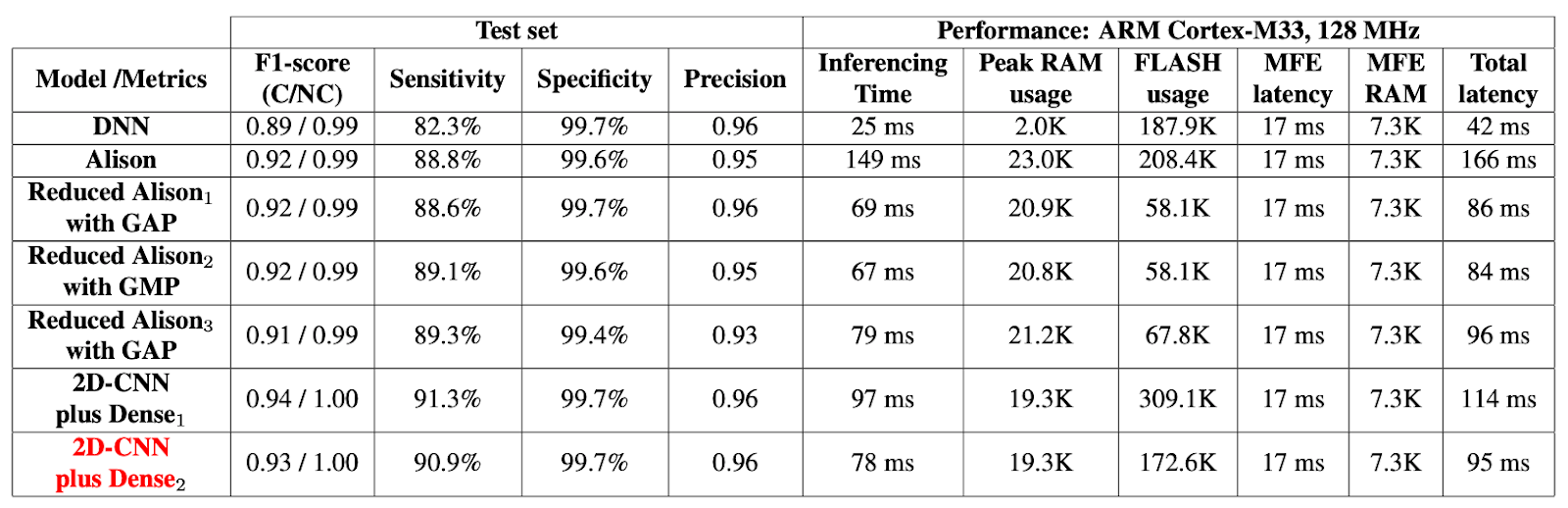

The key metrics for comparing models are model performance, inference time, peak RAM usage, and flash usage. The Edge Impulse platform reports all of them, which allowed Hyfe to compare models easily without expensive and time-consuming testing.

Model Performance: Confusion matrices, accuracy, precision and recall for each class.

Inference Time: The time taken to process and make predictions on audio data.

Peak RAM Usage: The maximum amount of memory required during inference.

Flash Usage: The storage space needed for the model.
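The model performance figures can all be derived from a binary confusion matrix. Here is a minimal sketch in plain Python; the labels and predictions are toy values chosen for the example, not Hyfe's data, and the function names are hypothetical.

```python
def confusion_counts(y_true, y_pred, positive="cough"):
    """Return (tp, fp, tn, fn), treating `positive` as the class of interest."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive and truth == positive:
            tp += 1
        elif pred == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def ratio(numerator, denominator):
    # Guard against empty classes instead of raising ZeroDivisionError.
    return numerator / denominator if denominator else 0.0


def summarize(y_true, y_pred, positive="cough"):
    tp, fp, tn, fn = confusion_counts(y_true, y_pred, positive)
    return {
        "accuracy": ratio(tp + tn, tp + fp + tn + fn),
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),       # also called sensitivity
        "specificity": ratio(tn, tn + fp),  # recall of the negative class
    }


# Toy example: 3 real coughs and 5 non-coughs.
y_true = ["cough"] * 3 + ["other"] * 5
y_pred = ["cough", "cough", "other", "other", "other", "other", "cough", "other"]
metrics = summarize(y_true, y_pred)
print(metrics["accuracy"], metrics["specificity"])  # 0.75 0.8
```

For a cough detector, recall on the cough class is the sensitivity, and recall on the non-cough class is the specificity.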

Achieving Real-Time Cough Detection on an Embedded Target

The primary challenge in real-time cough detection on embedded devices is balancing model accuracy against the device’s limited computational resources, namely its restricted processing power and memory capacity. Achieving real-time performance under these constraints while maintaining adequate accuracy is crucial for practical applications. The Edge Impulse platform let Hyfe build and test multiple models, and because the training jobs ran on Edge Impulse’s GPU infrastructure, Hyfe was able to collect the data in the following table in a short period of time.
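Separately from Hyfe's measurements, the trade-off described above can be sketched as a simple selection rule: keep only the candidates that fit the target's time, RAM, and flash budgets, then pick the most accurate one. Every name and number below is a placeholder invented for illustration, including the assumption that a model counts as real time when its processing time per window does not exceed the stride between windows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    accuracy: float
    latency_ms: float   # DSP plus inference time per audio window
    peak_ram_kb: float
    flash_kb: float


@dataclass(frozen=True)
class Budget:
    window_stride_ms: float  # time available before the next window arrives
    ram_kb: float
    flash_kb: float


def fits(candidate, budget):
    """True if the model keeps up with the audio and fits in memory."""
    return (
        candidate.latency_ms <= budget.window_stride_ms
        and candidate.peak_ram_kb <= budget.ram_kb
        and candidate.flash_kb <= budget.flash_kb
    )


def best_fitting(candidates, budget):
    """Most accurate candidate within budget, or None if nothing fits."""
    feasible = [c for c in candidates if fits(c, budget)]
    return max(feasible, key=lambda c: c.accuracy, default=None)


# Placeholder values only; these are not results from the study.
budget = Budget(window_stride_ms=500.0, ram_kb=128.0, flash_kb=512.0)
candidates = [
    Candidate("model_a", accuracy=0.97, latency_ms=800.0, peak_ram_kb=200.0, flash_kb=900.0),
    Candidate("model_b", accuracy=0.95, latency_ms=300.0, peak_ram_kb=90.0, flash_kb=400.0),
    Candidate("model_c", accuracy=0.90, latency_ms=50.0, peak_ram_kb=20.0, flash_kb=100.0),
]
choice = best_fitting(candidates, budget)
print(choice.name)  # model_b
```

In this made-up example the most accurate model is rejected because it cannot keep up with the audio stream, which is exactly the kind of compromise an embedded deployment forces.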

Conclusion

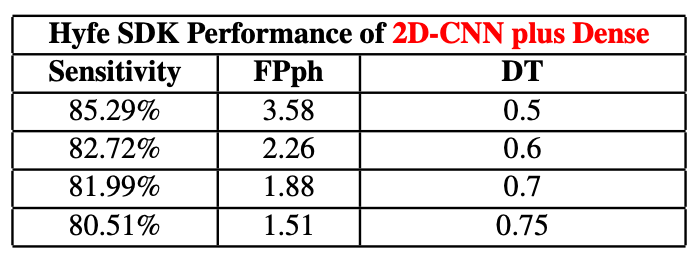

By using the Edge Impulse platform and carefully selecting a model architecture, Hyfe achieved a sensitivity of 90.9% and a specificity of 99.7% while maintaining low inference times and a small memory footprint. Because Edge Impulse reported model performance, inference time, peak RAM usage, and flash usage for each model, Hyfe was able to iterate quickly through different models. Even with over 3.5 million samples of audio data, the platform was able to build MFE features, train the model, and provide a deployment file efficiently.

This study is only a beginning: it opens the door to enhanced cough detection capabilities in embedded applications. Testing on hardware targets other than the Arm Cortex-M33, or trying out different digital signal processing or machine learning blocks, is possible with the Edge Impulse platform.

Hero image: Photo by Towfiqu barbhuiya on Unsplash