There are roughly 50 billion pieces of litter alongside roadways and waterways in the United States, according to the Keep America Beautiful organization. This equates to 152 pieces of litter for each and every resident of the country. This is not exactly a popular state of affairs, with a full ninety percent of people surveyed believing litter to be a problem in their state. Aside from the obvious undesirable appearance of a landscape marred by trash, litter also causes water, soil, and air pollution as it degrades and releases chemicals. Further, millions of animals are killed by entrapment in, or ingestion of, improperly discarded waste.

Slogans about not being a litterbug, or giving a hoot and not polluting, are fine and all, but the statistics make it clear that they are not solving the problem. And as matters currently stand, sending out enough people to track down and recover all of the waste already out there is highly impractical. A better solution is needed, and thanks to roboticist and machine learning enthusiast Kutluhan Aktar, we may be a bit closer to that solution. Aktar has developed an autonomous litter detection robot that can cruise around, find areas with lots of waste items, and report those findings back to an operator.

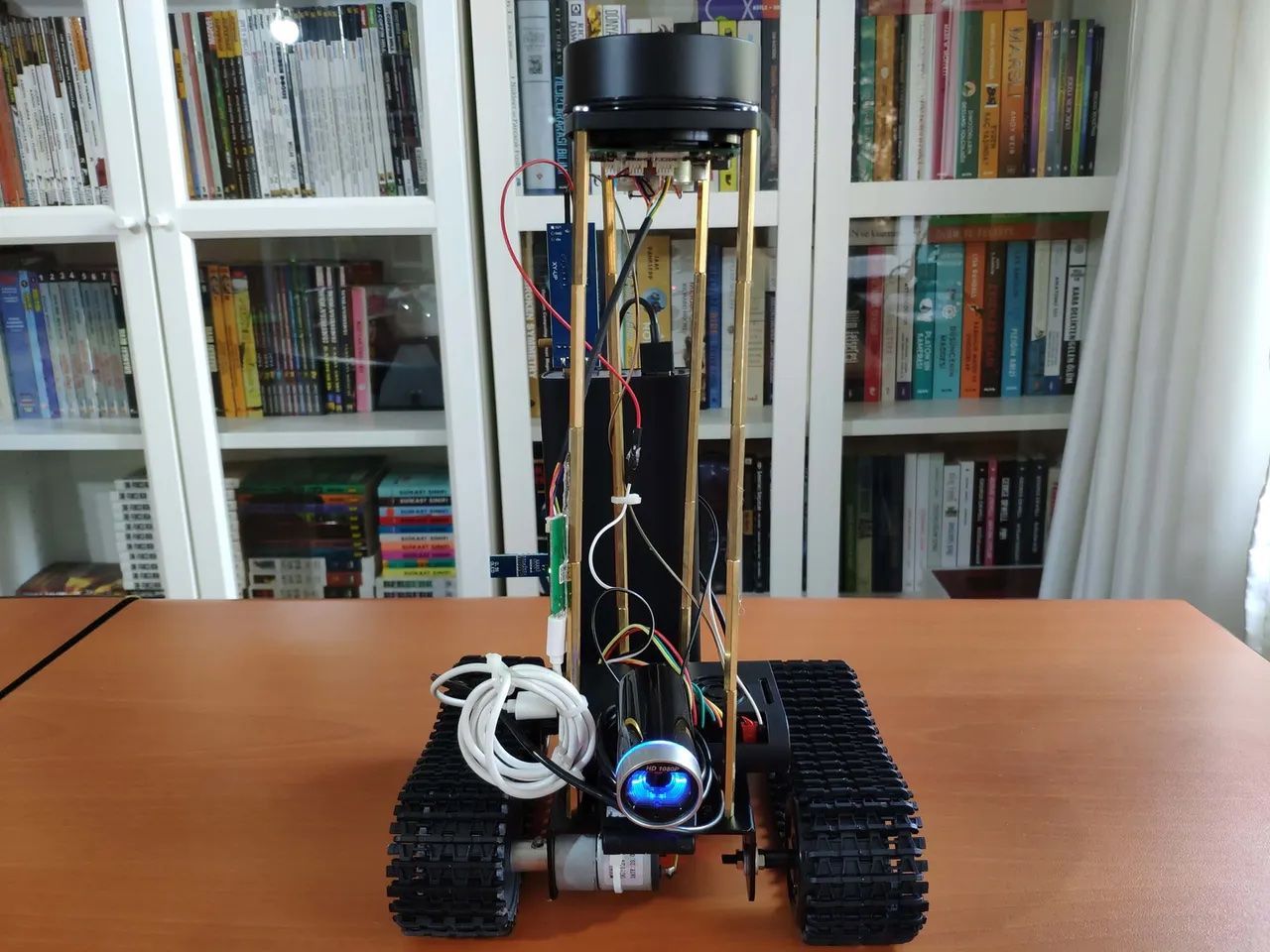

The prototype is built on top of a tank-like DFRobot Black Gladiator tracked robot chassis, with an L298N motor driver module to control its DC motors. The robot was given sight with an RPLIDAR A1M8 360 Degree Laser Scanner and a USB webcam. A Raspberry Pi 4 computer provides the processing power for the device, and controls the motors and laser scanner via its GPIO pins. An Arduino Nano board and six-axis accelerometer were also added to the build to serve as a fall detector for the robot, just in case it runs into any trouble in the field.
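To give a feel for how a tracked chassis is driven through an L298N, here is a minimal sketch of the control logic in Python. It is not Aktar's code: the `MotorCommand` type, the `motor_command` and `tank_drive` helpers, and the choice of which input is driven high for "forward" are all assumptions made for illustration (the real direction depends on how the motors are wired). The sketch only computes the pin states and PWM duty cycle, so it runs without any hardware attached.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class MotorCommand:
    """Desired L298N state for one motor channel (hypothetical helper type)."""
    in_a: bool   # first direction input (IN1 or IN3 on the L298N)
    in_b: bool   # second direction input (IN2 or IN4 on the L298N)
    duty: float  # PWM duty cycle in percent for the enable pin (ENA or ENB)


def motor_command(speed: float) -> MotorCommand:
    """Map a signed speed in [-1.0, 1.0] to L298N inputs for one motor."""
    speed = max(-1.0, min(1.0, speed))  # clamp out-of-range requests
    if speed == 0:
        # Both inputs low and zero duty: the motor is not driven.
        return MotorCommand(False, False, 0.0)
    forward = speed > 0
    # Assumption: in_a high / in_b low spins the track forward.
    return MotorCommand(forward, not forward, abs(speed) * 100.0)


def tank_drive(throttle: float, turn: float) -> Tuple[MotorCommand, MotorCommand]:
    """Mix throttle and turn into (left, right) track commands."""
    return motor_command(throttle + turn), motor_command(throttle - turn)
```

With this mixing, `tank_drive(0.5, 0.0)` drives both tracks forward at half duty, while `tank_drive(0.0, 1.0)` runs the tracks in opposite directions so the robot pivots in place. On a real Raspberry Pi, the returned values would then be written to the GPIO pins wired to the driver.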

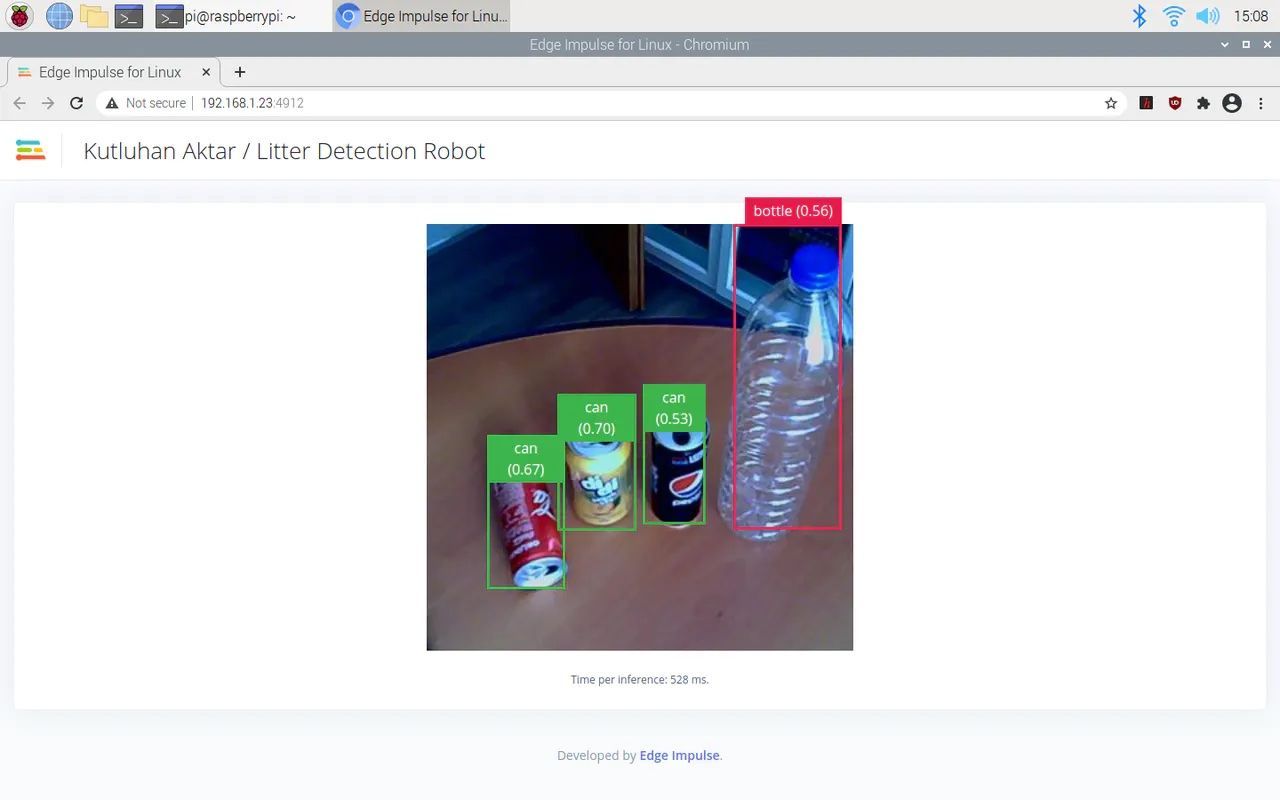

To make the robot perform some useful tasks, Aktar got to work building a neural network that can classify different kinds of trash with the help of Edge Impulse. The first step in this process was to locate some sample images to train the machine learning model. Some publicly available datasets were chosen, and example images of bottles, cans, and packaging made of various materials were extracted. Only 100 examples of each class were chosen, which is normally insufficient to train an image classifier, but Aktar found it was enough using transfer learning in Edge Impulse.

After uploading the dataset and labeling the images, the rest of the impulse was created. This impulse preprocesses input data by converting images to grayscale, then creates a features array to optimize training and inference performance. Just a few more clicks were needed to add a transfer learning block for the neural network classifier. With the data processing pipeline finalized, the model was trained on the training dataset. When the trained model was run against the test dataset, it achieved a classification accuracy of 84.11%, which is quite impressive given the small amount of training data supplied.
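For readers curious about what the grayscale preprocessing step amounts to, the sketch below shows the general idea in plain Python. Edge Impulse handles this inside its image processing block, so this is not its actual implementation: the `to_grayscale` and `image_to_features` helpers are hypothetical, and the ITU-R BT.601 luma weights are a common convention assumed here for illustration.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each channel in 0-255


def to_grayscale(pixel: Pixel) -> float:
    """Return a brightness value in [0, 1] using ITU-R BT.601 luma weights."""
    r, g, b = pixel
    return (0.299 * r + 0.587 * g + 0.114 * b) / 255.0


def image_to_features(image: Sequence[Sequence[Pixel]]) -> List[float]:
    """Flatten a row-major RGB image into one grayscale feature per pixel."""
    return [to_grayscale(px) for row in image for px in row]


# A tiny 2x2 example image: black, white, pure red, mid gray.
example = [
    [(0, 0, 0), (255, 255, 255)],
    [(255, 0, 0), (128, 128, 128)],
]
features = image_to_features(example)
```

The payoff is a smaller input: a grayscale image needs one value per pixel instead of three, so a hypothetical 96x96 input shrinks from 27,648 features to 9,216, which reduces the work needed for both training and on-device inference.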

Deploying the model to run locally on the robot’s Raspberry Pi 4 was a simple process, requiring only a few commands to be executed in the terminal to connect to Edge Impulse and transfer the relevant files. From there Aktar developed some Python scripts to control the robot. The laser scanner is used to guide the robot around its environment, and help it to avoid obstacles. As it travels around, it captures images with the webcam, which are classified by the neural network. An operator can remotely get a view of what the robot sees, as well as any classifications that it makes, by loading a simple web application.
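The obstacle avoidance described above can be pictured as a simple decision over each 360 degree scan. The sketch below is one possible approach rather than Aktar's actual script: the `choose_action` function, the sector boundaries, the 400 mm stop distance, and the convention that 0 degrees points forward with angles increasing clockwise are all assumptions. It also assumes that non-positive distances mark invalid readings and should be ignored.

```python
from typing import Iterable, List, Tuple

Reading = Tuple[float, float]  # (angle in degrees, distance in millimeters)


def in_sector(angle: float, start: float, end: float) -> bool:
    """True if angle lies in [start, end), handling wrap-around at 360."""
    angle, start, end = angle % 360.0, start % 360.0, end % 360.0
    if start <= end:
        return start <= angle < end
    return angle >= start or angle < end  # sector passes through 0 degrees


def nearest(scan: List[Reading], start: float, end: float) -> float:
    """Closest valid distance within a sector, or infinity if none."""
    hits = [d for a, d in scan if d > 0 and in_sector(a, start, end)]
    return min(hits) if hits else float("inf")


def choose_action(scan: Iterable[Reading], stop_mm: float = 400.0) -> str:
    """Pick 'forward', 'left', or 'right' from one full scan."""
    readings = list(scan)
    if nearest(readings, -30.0, 30.0) > stop_mm:
        return "forward"  # nothing close in the 60 degree cone ahead
    # Path is blocked: turn toward whichever side has more room.
    left = nearest(readings, 270.0, 330.0)
    right = nearest(readings, 30.0, 90.0)
    return "left" if left >= right else "right"
```

For example, a scan with an object 200 mm straight ahead, a wall 300 mm to the left, and open space to the right yields `"right"`. A real control loop would call a function like this on every scan and pass the result on to the motor driver.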

With a bit more refinement, it is easy to imagine an army of robots scouting out large collections of waste, allowing for targeted collection efforts. To see all the details of this great project, be sure to check out Aktar’s full write-up.
