Retail shrink, the gap between a store’s recorded inventory and what it actually has in stock, has been plaguing retailers more in recent years than ever before. The National Retail Federation (NRF) reported late last year that retail shrink is now responsible for nearly $100 billion in losses for retailers each year in the United States. A number of factors contribute to retail shrink, but the largest is shoplifting. A national survey conducted by the NRF found that 73.2% of retailers experienced an increase in shoplifting in the past year, which underscores how urgently this problem needs to be addressed.

Retailers feel the impact of shoplifting in more ways than financial losses alone. It also affects the safety of employees and customers, as shoplifters can become confrontational when caught. Retailers are also forced to raise prices to cover their losses, which can reduce customer satisfaction and sales. In the worst cases, stores may need to cut back their operating hours, or even close certain locations if the problem cannot be brought under control.

Retailers are taking proactive measures to reduce shoplifting, such as training employees to detect and deter theft, increasing the visibility of security personnel, and using technology to track shoplifters. But these measures can be both challenging and costly to implement, and have met with limited success. Engineer Roni Bandini believes that addressing shoplifting on a large scale calls for an automated device that can detect suspicious activity that may indicate shoplifting. For this solution to be widely deployed, it must be inexpensive and simple to use.

As a first step towards realizing his goal, Bandini decided to use computer vision and machine learning to recognize bags. The idea is that if someone is seen with a bag in a location where it would not be expected, that could be a sign of trouble and is worthy of further scrutiny. To keep things as simple as possible, he chose to use Edge Impulse Studio to design an object detection pipeline capable of identifying bags. Using the same methods laid out in this proof-of-concept project, one could add other suspicious objects or behaviors to look for.
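The "bag where it is not expected" rule can be sketched in a few lines of Python. This is only an illustration, not Bandini's code: the `Detection` and `Zone` types, the `bags_in_zone` helper, the zone coordinates, and the 0.5 confidence threshold are all hypothetical, and coordinates are assumed to be pixels in the frame that the model sees.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Detection:
    """One object reported by the model (hypothetical structure)."""
    label: str    # class name, e.g. "bag"
    score: float  # confidence in [0, 1]
    x: float      # centre of the detection, in pixels
    y: float


@dataclass(frozen=True)
class Zone:
    """Axis-aligned rectangle marking an area where bags are not expected."""
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x: float, y: float) -> bool:
        return self.left <= x <= self.right and self.top <= y <= self.bottom


def bags_in_zone(detections: List[Detection], zone: Zone,
                 min_score: float = 0.5) -> List[Detection]:
    """Return confident "bag" detections whose centre lies inside the zone."""
    return [
        d for d in detections
        if d.label == "bag" and d.score >= min_score and zone.contains(d.x, d.y)
    ]


if __name__ == "__main__":
    # Assumed example: the left half of a small frame is the restricted area.
    restricted = Zone(left=0, top=0, right=48, bottom=96)
    frame = [
        Detection("bag", 0.91, x=20, y=40),  # inside the zone
        Detection("bag", 0.88, x=70, y=40),  # outside the zone
        Detection("bag", 0.30, x=10, y=10),  # too uncertain to count
    ]
    print(bags_in_zone(frame, restricted))
```

Keeping the zone test separate from the model means that new restricted areas or new object labels can be added without retraining anything.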

The Texas Instruments TDA4VM Starter Kit was selected as the hardware for the device because this platform was designed for edge AI vision systems from the ground up. Despite its low power consumption, the TDA4VM processor is capable of performing up to 8 trillion operations per second and sports hardware acceleration for running AI algorithms. And with 4 GB of fast DRAM available, it has everything that is needed to handle resource-intensive computer vision applications. A webcam was plugged into one of the board’s USB 3.0 connectors to complete the hardware build.

A Linux operating system image was downloaded from TI and flashed to the board, which was then ready for use. But before the processing pipeline could be developed, sample data was needed to help the machine learning algorithm learn to do its job. Bandini decided to build his own dataset by capturing 100 images of himself holding various bags at different angles and under different lighting conditions. If a suitable public dataset already exists, it would also be possible to use that and skip collecting your own images.

The collected samples were uploaded to an Edge Impulse Studio project using the data acquisition tool. In order for an object detection model to know what a bag looks like, one more step needs to be completed: drawing bounding boxes around the bag in each image. This can be very tedious and time-consuming, even with a small dataset like the one in this project. Fortunately, the labeling queue tool does most of the work for you. It runs an AI algorithm in the background to determine where the object(s) of interest are, and it draws a suggestion of where each bounding box should be. All that remains is to confirm that the boxes are correct, and occasionally make a small tweak.

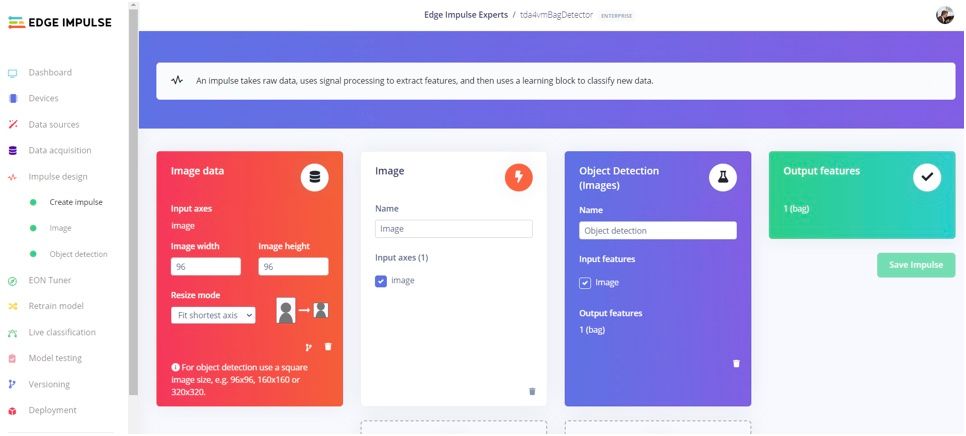

With the data preparation out of the way, Bandini turned to creating the impulse that defines the data analysis pipeline. The impulse starts with a step that resizes images to 96 x 96 pixels. Keeping the image resolution low reduces the computational resources required by downstream steps. The data is then passed into Edge Impulse’s FOMO object detection algorithm. FOMO can track multiple objects in real time using up to 30 times less processing power and memory than MobileNet SSD or YOLOv5.
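Part of FOMO's efficiency comes from reporting object locations on a coarse grid of cells rather than as full bounding boxes. The sketch below shows, in simplified form, how such a grid could be turned into object centroids. It assumes a downsampling factor of 8, so a 96 x 96 input gives a 12 x 12 grid. The `grid_to_centroids` helper and the 0.5 threshold are hypothetical, and the merging of neighbouring cells that a real pipeline would perform is left out.

```python
from typing import List, Tuple

INPUT_SIZE = 96  # pixels per side, matching the resize step in the impulse
CELL_SIZE = 8    # assumed downsampling factor (96 / 8 = 12 cells per side)


def grid_to_centroids(heatmap: List[List[float]],
                      threshold: float = 0.5) -> List[Tuple[float, float, float]]:
    """Turn a per-cell probability grid into (x, y, score) centroids in pixels."""
    centroids = []
    for row, cells in enumerate(heatmap):
        for col, score in enumerate(cells):
            if score >= threshold:
                # Report the centre of the cell in input-image coordinates.
                x = (col + 0.5) * CELL_SIZE
                y = (row + 0.5) * CELL_SIZE
                centroids.append((x, y, score))
    return centroids


if __name__ == "__main__":
    side = INPUT_SIZE // CELL_SIZE
    heatmap = [[0.0] * side for _ in range(side)]
    heatmap[3][5] = 0.93  # pretend the model saw a bag in row 3, column 5
    print(grid_to_centroids(heatmap))
```

Because each cell only answers "is there a bag here?", the output is a set of points rather than boxes, which is enough to decide where in the frame a bag was seen.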

The model’s hyperparameters were adjusted slightly, then the training process was initiated with the click of a button. In under a minute, model training had completed, and metrics were displayed to help determine how well the algorithm was performing. Impressively, an accuracy score of almost 97% had been achieved on the first attempt. Much of this success can be attributed to selecting the FOMO model, since most other options require much larger sets of training data to achieve similar levels of accuracy.

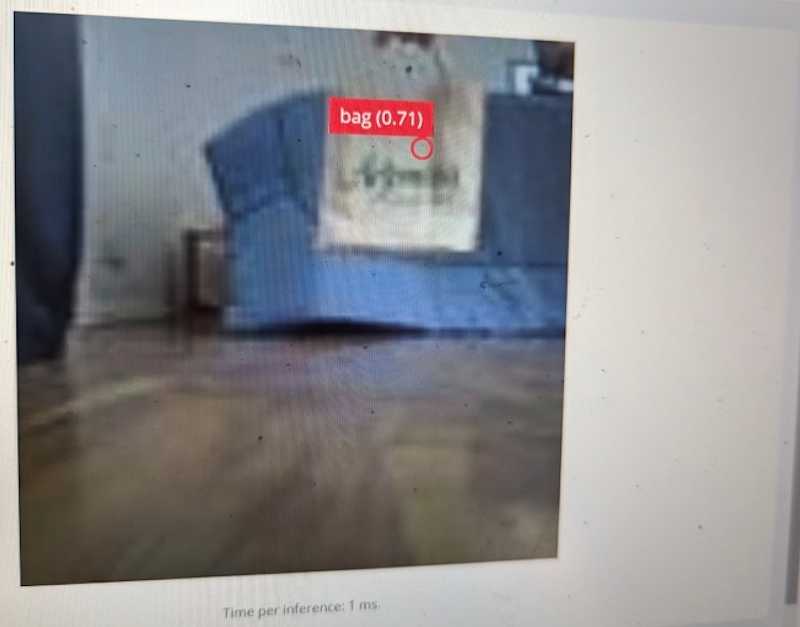

Since the pipeline was performing so well, Bandini was ready to deploy it to the physical hardware at this point. Deployment allows the algorithm to run directly on the hardware, eliminating the need for wireless connectivity and cloud compute resources, and avoiding the privacy implications of sending a stream of images to the cloud. Since the TDA4VM Starter Kit runs Linux, installing the Edge Impulse CLI on the board was a snap, only requiring a single command to be typed in the terminal. After downloading a compressed file containing the entire analysis pipeline from Edge Impulse Studio, the CLI was used to quickly start up a sample application that performs object detection.

The sample application starts a web app that can be opened in a browser to see the device in action. Bandini used this interface to run a series of real-world tests, and he found the system to be working exactly as expected. In the future, the system could be extended to send alerts to security personnel when suspicious activity is detected.
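One way such an alerting extension might work is to require several suspicious frames in a row before notifying anyone, and then to stay quiet for a while so that staff are not flooded with messages. The following is a minimal sketch of that idea and is not part of Bandini's project: the `AlertDebouncer` class, its parameters, and the per-frame boolean verdicts are all hypothetical, and the actual delivery of the alert (email, chat message, buzzer) is left as a `print` placeholder.

```python
class AlertDebouncer:
    """Fire an alert only after a run of suspicious frames, then pause."""

    def __init__(self, min_consecutive: int = 5, cooldown_frames: int = 30):
        self.min_consecutive = min_consecutive  # suspicious frames needed in a row
        self.cooldown_frames = cooldown_frames  # minimum frames between two alerts
        self._streak = 0
        self._cooldown = 0

    def update(self, suspicious: bool) -> bool:
        """Feed one frame's verdict; return True when an alert should fire."""
        if self._cooldown > 0:
            self._cooldown -= 1
        # A single clean frame resets the run of suspicious frames.
        self._streak = self._streak + 1 if suspicious else 0
        if self._streak >= self.min_consecutive and self._cooldown == 0:
            self._cooldown = self.cooldown_frames
            self._streak = 0
            return True
        return False


if __name__ == "__main__":
    debouncer = AlertDebouncer(min_consecutive=3, cooldown_frames=4)
    verdicts = [True, False, True, True, True, True, True]
    for frame_number, verdict in enumerate(verdicts):
        if debouncer.update(verdict):
            # Placeholder: a real system would notify security personnel here.
            print(f"ALERT at frame {frame_number}")
```

Requiring a run of frames trades a short delay for fewer false alarms from one-off misdetections; both parameters would need tuning against real footage.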

What ideas do you have to enhance this anti-shoplifting system? Would you detect new types of suspicious activities? Or maybe you have ideas about the best types of alarms to trigger? Whatever direction you want to take it, reading up on Bandini’s documentation is a great way to get started. And to save some time, you can also clone the public Edge Impulse Studio project as a template for your own design.
